We have reached a bizarre inflection point where the very people tasked with explaining AI are becoming its most public victims. This week, the tech world was rocked by news that a veteran Ars Technica reporter was let go following the discovery of AI-fabricated quotes in an article, while halfway across the globe, an Indian judge faced the wrath of the Supreme Court for citing non-existent, AI-generated legal orders. It is a sobering reminder that while LLMs are being pushed as productivity boosters, they are simultaneously eroding the foundational trust of our most critical institutions.

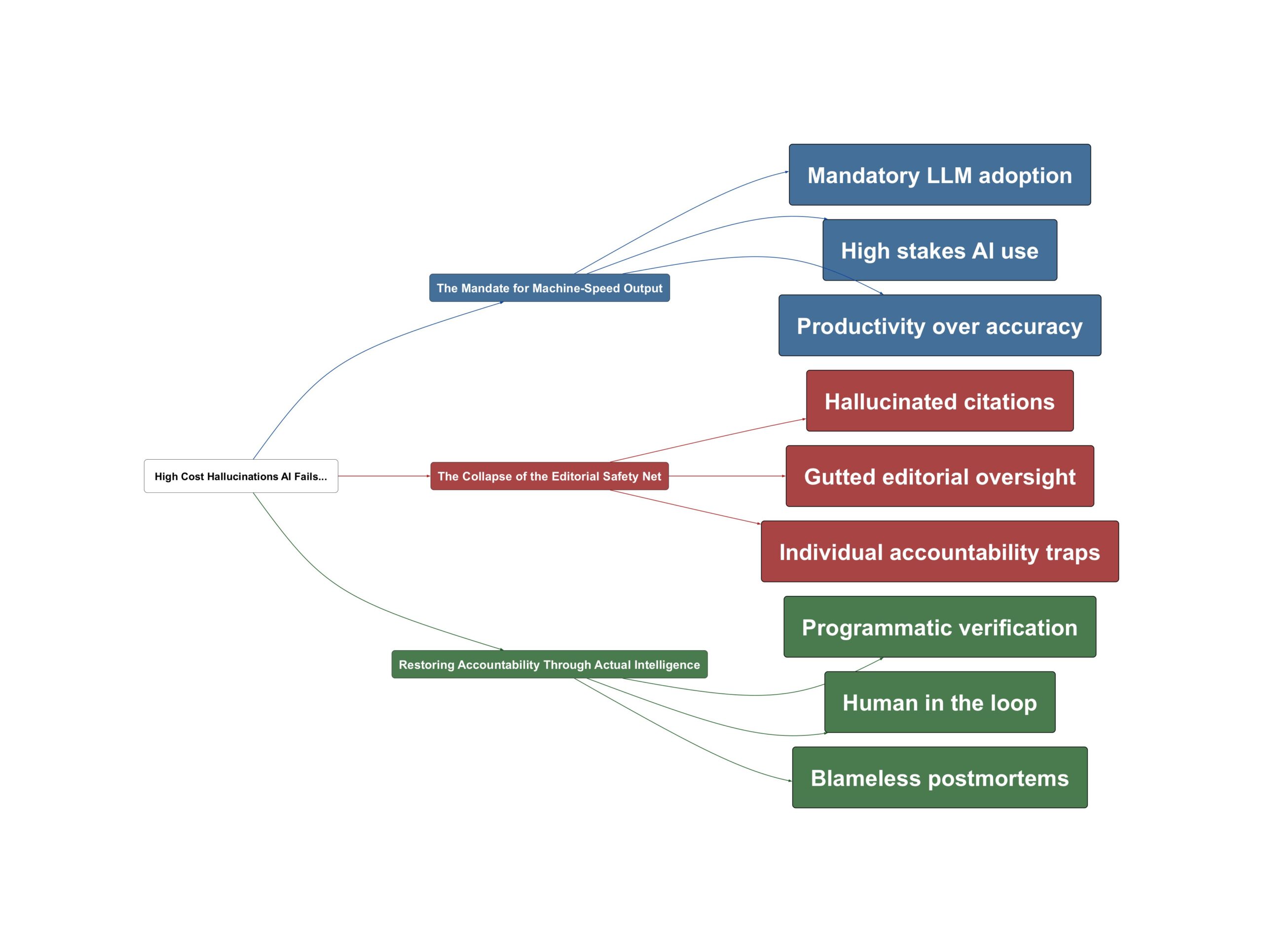

The Mandate for Machine-Speed Output

The current landscape is defined by a frantic push to integrate large language models into every professional workflow. As several users on Hacker News pointed out, this isn’t always a bottom-up choice by enthusiasts; it is often a top-down mandate. One commenter noted that it is an “open secret” that even large news outlets now mandate LLM use to maintain volume. This push to do more with less has created a situation where efficiency is prioritized over the slow, methodical work of traditional verification.

It also comes at a moment in which many media bosses are pushing staff to find uses for AI — as are executives across most industries.

In the legal sector, the pressure is equally high, with backlogs of cases driving a desperate search for automation. However, the transition from “useful assistant” to “untrusted source” happens faster than most organizations are prepared for. Institutions around the world are adopting these tools with unrealistic expectations of accuracy, often ignoring what next-token prediction actually does: the model generates the most plausible continuation of its input, with no built-in check that a quoted sentence or a cited order exists.

The Collapse of the Editorial Safety Net

The real complication isn’t just that an AI lied; it’s that the systems designed to catch those lies have been hollowed out. The community reaction highlighted that newsrooms and legal offices have spent a decade cutting the very staff (fact-checkers, copy editors, and senior clerks) who would traditionally catch a fabricated quote or a fake case citation. When you remove the verification layers, the individual is left alone with a tool that is optimized to sound plausible, not to be correct. As one HN user succinctly put it:

“Newsrooms have been cutting editorial staff for a decade, which means the verification layers that would have caught this… largely don’t exist anymore.”

This creates a dangerous feedback loop. Junior practitioners or overworked veterans, like the reporter who claimed to be working through a fever, use AI to summarize or extract data, only for the tool to “hallucinate” details that look perfectly legitimate. The Ars Technica incident is painfully ironic: a senior AI reporter fell for the very failure mode he should have been most skeptical of. It raises an uncomfortable question: if the experts are getting fooled, what hope does the average professional have?

Restoring Accountability Through Actual Intelligence

Resolving this crisis requires moving beyond individual blame and toward systemic technical solutions. While firing a reporter or reprimanding a judge provides a quick PR fix, it doesn’t solve the underlying architectural risk of using LLMs. The most practical path forward involves programmatic verification. One commenter shared a robust solution used in their legal work: dumping AI transcripts into a script that hits official databases to cross-reference case IDs and party names before any text is ever published.
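To make the commenter's idea concrete, here is a minimal sketch of what such a cross-referencing script might look like. It is not the commenter's actual code. The citation format, the `check_citations` and `safe_to_publish` helpers, and the in-memory `registry` dict (standing in for a query against an official court database) are all assumptions made for illustration, and the case names in the usage example are made up.

```python
import re
from dataclasses import dataclass

# Hypothetical citation format, e.g. "Case No. 2021/4512 (Sharma v. State)".
# Real courts use many formats; the pattern must be adapted per jurisdiction.
CITATION_RE = re.compile(r"Case No\. (\d{4}/\d+) \(([^)]+)\)")


@dataclass
class CheckResult:
    case_id: str
    claimed_parties: str
    status: str  # "verified", "party_mismatch", or "not_found"


def normalize(name: str) -> str:
    """Lowercase and collapse whitespace so trivial differences do not fail a match."""
    return " ".join(name.lower().split())


def check_citations(text: str, registry: dict[str, str]) -> list[CheckResult]:
    """Cross-reference every citation found in `text` against `registry`.

    `registry` maps case ID -> official party names. Here it is a plain dict
    standing in for a lookup against an official database (an assumption).
    """
    results = []
    for case_id, parties in CITATION_RE.findall(text):
        official = registry.get(case_id)
        if official is None:
            status = "not_found"        # the case ID does not exist at all
        elif normalize(official) != normalize(parties):
            status = "party_mismatch"   # real ID, but attached to the wrong parties
        else:
            status = "verified"
        results.append(CheckResult(case_id, parties, status))
    return results


def safe_to_publish(results: list[CheckResult]) -> bool:
    """Block publication unless every extracted citation was verified.

    Note: a text with no recognizable citations passes trivially, so this
    check complements human review rather than replacing it.
    """
    return all(r.status == "verified" for r in results)
```

A quick usage example with invented data:

```python
registry = {"2021/4512": "Sharma v. State", "2019/88": "Rao v. Union"}
draft = (
    "As held in Case No. 2021/4512 (Sharma v. State) and "
    "Case No. 2020/7 (Mehta v. Gupta), the order stands."
)
for result in check_citations(draft, registry):
    print(result.case_id, result.status)
```

The important design choice is that the check fails closed: a citation the database cannot confirm blocks publication, instead of being waved through because it looks right.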

The high court also advocated for the exercise of actual intelligence over artificial intelligence.

We must also embrace the concept of “blameless postmortems” borrowed from the software industry. Instead of simply discarding the human, organizations need to rebuild their editorial protocols. This means treating AI output as radioactive material that requires heavy shielding and multiple layers of manual verification. In practice, this looks like mandating original source links for every quote and using AI only for drafting, never for fact retrieval. Ultimately, the industry must decide if the speed gains of AI are worth the potential collapse of institutional credibility.
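The "source for every quote" rule can be partly automated. The sketch below is one possible approach, not an established newsroom tool: it pulls quoted passages out of a draft and flags any that do not appear verbatim in the source material the writer supplied. The `unverified_quotes` function, the `min_words` threshold, and the sample text are all hypothetical.

```python
import re

# Matches text inside curly quotes or straight double quotes.
QUOTE_RE = re.compile(r'“([^”]+)”|"([^"]+)"')


def normalize(text: str) -> str:
    """Lowercase, unify apostrophes, and collapse whitespace before comparing."""
    text = text.replace("’", "'").replace("‘", "'")
    return " ".join(text.lower().split())


def unverified_quotes(draft: str, source_text: str, min_words: int = 4) -> list[str]:
    """Return quotes from `draft` that do not appear verbatim in `source_text`.

    Quotes shorter than `min_words` are skipped, on the assumption that they
    are scare quotes or terms of art, not attributed speech.
    """
    source = normalize(source_text)
    missing = []
    for curly, straight in QUOTE_RE.findall(draft):
        quote = curly or straight
        if len(quote.split()) < min_words:
            continue
        if normalize(quote) not in source:
            missing.append(quote)
    return missing
```

An empty result does not prove a quote is fairly attributed or in context. It only shows the words exist in the source, which is exactly the check a fabricated quote fails.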

💡 Key Takeaway: AI output must be treated as toxic until programmatically or manually verified against primary sources.

- Ars Technica fires reporter after AI controversy involving fabricated quotes

- India’s top court angry after junior judge cites fake AI-generated orders