The landscape of artificial intelligence is shifting from models that simply talk to models that actually do. We are witnessing a transition from passive chatbots to active agents capable of navigating complex tasks. But how exactly are these agents evolving, and what does it mean for the future of productivity?

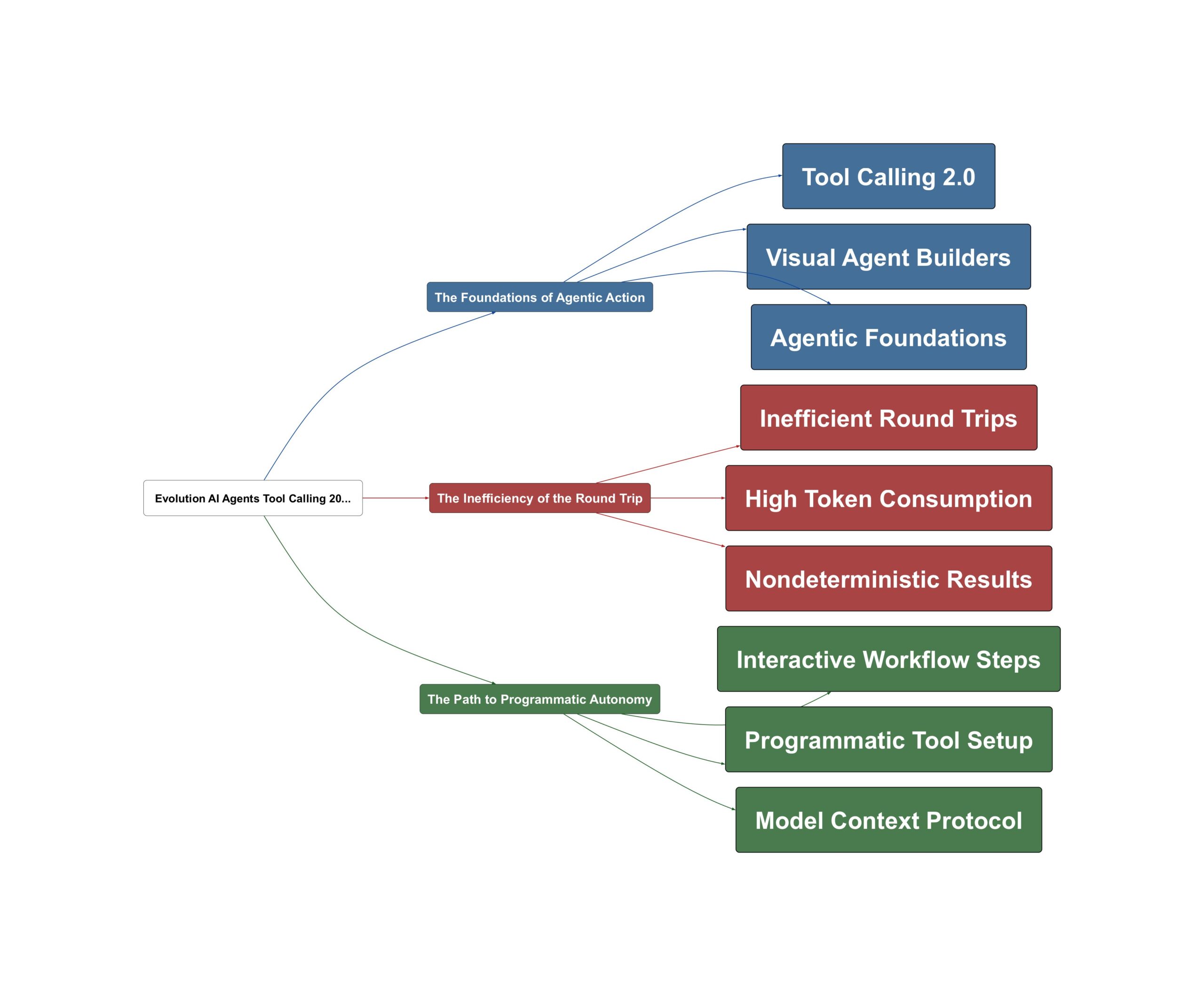

The Foundations of Agentic Action

For the past two years, the bedrock of AI agents has been a process known as tool calling. As AI Jason explains, this mechanism “transform[s a] large language model from outputting pure text to outputting a specific JSON that can be used to invoke an API or functions.” This capability lets a model's output trigger real actions instead of only producing text. Building on this, Google has introduced Opal, a no-code visual builder designed to democratize these complex systems. According to Sam Witteveen,

Google is basically trying to work out what are the key things that people actually want to use agents for

— Sam Witteveen

This democratization is a significant milestone: it puts agent building within reach of the average user through a drag-and-drop interface for defining the specific rails and workflows an agent should follow.
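The tool-calling mechanism described in this section can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's API: the `get_weather` tool, the `TOOLS` registry, and the exact JSON shape (`name` plus `arguments`) are all assumptions made for the example.

```python
import json

# Hypothetical tool: a stand-in for a real API call.
def get_weather(city: str) -> str:
    return f"Sunny in {city}"

# Registry mapping tool names to Python callables (assumed structure).
TOOLS = {"get_weather": get_weather}

def dispatch(tool_call_json: str) -> str:
    """Parse a model-emitted tool call and invoke the matching function."""
    call = json.loads(tool_call_json)
    func = TOOLS[call["name"]]
    return func(**call["arguments"])

# What a model might emit instead of plain text (the format is assumed).
model_output = '{"name": "get_weather", "arguments": {"city": "Paris"}}'
result = dispatch(model_output)
print(result)
```

The model never runs anything itself. It only produces the JSON, and the surrounding application decides which function to call and with what arguments.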

The Inefficiency of the Round Trip

However, as we push agents toward more complex, long-running tasks, the cracks in the traditional framework begin to show. The current process often feels like a tedious “ping-pong” match between the model and the server. AI Jason points out that for complex tasks,

we’re basically purely relying on large language model to generate the parameters for each function.

— AI Jason

This reliance wastes tokens in the context window. Imagine an agent searching through emails: it must fetch an ID, return it to the model, then use that ID to fetch the content, repeating this loop dozens of times. “Here we are relying on [the] large language model to regenerate the ID exactly [the] same,” notes AI Jason, highlighting a fragility that can cause intermittent, hard-to-reproduce errors. We are essentially asking a sophisticated model to perform repetitive manual labor, which adds overhead and risks failure whenever the model loses track of a specific identifier.
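To make the cost of the email example concrete, here is a rough simulation of the round-trip loop. It assumes a tiny in-memory `MAILBOX` and two hypothetical tools, `list_email_ids` and `get_email`; no real model or email API is involved, and characters stand in for tokens.

```python
# Hypothetical in-memory mailbox standing in for an email API.
MAILBOX = {
    "msg-001": "Invoice for March",
    "msg-002": "Team lunch on Friday",
    "msg-003": "Invoice for April",
}

def list_email_ids() -> list:
    return sorted(MAILBOX)

def get_email(email_id: str) -> str:
    return MAILBOX[email_id]

def run_round_trips() -> tuple:
    """Count model turns and characters added to context in a naive loop."""
    context = []  # everything the model would have to re-read on each turn
    turns = 0

    # Turn 1: the model asks for IDs, and the full list lands in context.
    ids = list_email_ids()
    context.append(str(ids))
    turns += 1

    # One further turn per email: in a real agent the model would have to
    # re-emit each ID exactly, and every email body is appended to context.
    for email_id in ids:
        context.append(get_email(email_id))
        turns += 1

    return turns, sum(len(chunk) for chunk in context)

turns, context_chars = run_round_trips()
print(turns, context_chars)
```

With three emails the loop already takes four turns. The turn count and the context size both grow with the number of emails, which is the overhead described above.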

The Path to Programmatic Autonomy

The solution lies in a more integrated approach to agent design. Anthropic’s recent updates, which represent a “Tool Calling 2.0,” aim to streamline these complex tasks by reducing the friction of the round-trip process. Simultaneously, Google Labs is evolving its tools to handle more than just static paths. Here’s the key insight: we are moving toward interactive, step-based autonomy. Sam Witteveen observes that “they’re introducing this new agent step that turns static workflows into interactive” experiences. By combining programmatic tool setups with interactive steps, developers can create agents that are both more reliable and more efficient. The new action plan for agent builders includes:

- Programmatic Setup: Hard-coding specific parameters to reduce model hallucination and token waste.

- Interactive Steps: Turning static workflows into dynamic, user-responsive experiences.

- Context Optimization: Minimizing the ping-pong effect to keep the context window focused on the task.
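One way to picture the programmatic setup and context optimization items in the list above is a short script that chains the tools itself, so identifiers are passed as variables rather than regenerated by the model. This is a plain-Python sketch under stated assumptions, not Anthropic's or Google's actual interface: `MAILBOX`, `list_email_ids`, `get_email`, and `find_invoices` are all hypothetical names.

```python
# Hypothetical in-memory mailbox standing in for an email API.
MAILBOX = {
    "msg-001": "Invoice for March",
    "msg-002": "Team lunch on Friday",
    "msg-003": "Invoice for April",
}

def list_email_ids() -> list:
    return sorted(MAILBOX)

def get_email(email_id: str) -> str:
    return MAILBOX[email_id]

def find_invoices() -> list:
    """Chain both tools in code so IDs never pass back through the model."""
    matches = []
    for email_id in list_email_ids():
        body = get_email(email_id)  # the ID is a variable, not regenerated text
        if "invoice" in body.lower():
            matches.append(email_id)
    return matches

# Only this short result would need to enter the model's context.
summary = find_invoices()
print(summary)
```

The design choice here is that intermediate values (the full ID list and every email body) stay inside the script, and only the final, filtered result is handed back.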

The bigger picture? As Sam Witteveen highlights,

as the models are getting better, the way that you actually build agents with frameworks and harnesses is dramatically changing as well.

— Sam Witteveen

The goal of this shift is agents that do not just follow a script but navigate complex digital environments dynamically, with precision and efficiency.

💡 Key Takeaway: Next-gen AI agents move beyond basic tool calling toward programmatic, interactive autonomy.

Video Sources